What's New In Surgical AI: 05/14 Edition

Vol 25: Don't call it a comeback, Google's been here for years

Welcome back! If you’re new to ctrl-alt-operate, we do the work of keeping up with AI, so you don’t have to. We’re grounded in our clinical-first context, so you can be a discerning consumer and developer. We’ll help you decide when you’re ready to bring A.I. into the clinic, hospital or O.R.

AAPL headsets are coming (to the OR?). GOOG’s I/O day (the mother of all press releases) was headlined by a new LLM reveal, PaLM 2. Scale.ai announced enterprise-grade models with DoD and deployed them with the XVIII Airborne Corps: is medicine next? Hollywood writers are on strike to prevent AI from taking their jobs (striking to avoid replacement is not a smart long-term strategy), a canary-in-the-coal-mine moment. In medicine, when AI writes our textbooks, will we be overjoyed or horrified? Education will be similarly disrupted, and we’ll talk about what that means for surgeons and patients. Our view is that physicians and technologists should partner to lead the coming changes in AI and avoid both AI-is-evil alignment problems and job-loss disruption, so we will keep you focused on leading from the front edge of AI and ML in medicine.

In our deep dive, we’ll talk about chatGPT’s new rival from Google, Bard. It came with a lot of fanfare, but is it really a contender?

📰The News: From headsets to GPT for your cells

I reviewed the first surgical video narrated by an AI this week, and you know what? It wasn’t half bad. These consumer-grade products could help surgeons communicate naturally, worldwide, accelerating innovation in science. Douglas Adams would be proud.

AAPL is edging closer to a probable XR headset release this July, which seems half-baked after >7 years of development, MSFT’s HoloLens failure and other lost causes. The struggles with XR headsets in consumer applications primarily stem from human factors limitations: people don’t like wearing bulky headsets. However, surgery is not a consumer application. There is a real opportunity to replace our existing vision enhancers (loupes, headlights) in the operating room, if only someone would seize it. There are only ~100,000 surgeons in the US, but we might pay $10K each, which gets us to the mythical $1B TAM (total addressable market) everyone craves. All it would take is an app store integration :)
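For anyone who wants to play with that back-of-the-envelope number, here is a minimal sketch of the arithmetic. The function name is ours, and the inputs are just the rough figures quoted above (about 100,000 surgeons, $10K per headset), not market research.

```python
def total_addressable_market(buyers: int, price_per_buyer_usd: int) -> int:
    """Rough TAM: every potential buyer purchases exactly one unit."""
    return buyers * price_per_buyer_usd


# Rough figures from the paragraph above (assumptions, not market data).
surgeons = 100_000
price_per_headset_usd = 10_000

tam = total_addressable_market(surgeons, price_per_headset_usd)
print(f"${tam:,}")  # $1,000,000,000
```

Change either input and the $1B headline moves with it: halve the price and you need twice the surgeons.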

Education is over, your kids will be taught by AI, and they’ll be smarter for it. The role of the teacher in the classroom of 2025 will be more like conductor than violinist. Every child will have a custom AI tutor. Existing first-gen AIs look like Khanmigo’s chatbots, and are already replacing far-worse alternatives that propagated in the murky depths of the cheating ecosystem (overseas paper writers, cheating forums). Here are some screenshots from learn.xyz’s platform for a custom lesson about brain development aimed at young kids. It reminded me to start at the basics (What is the Brain?), includes some mildly terrifying AI-art, and adds practice questions at the end to improve retention.

Doctor-patient education is getting better too (paper link). One of the core use cases for GPTs is translation, and friends-of-the-sub Rohaid Ali, Ben Johnston et al. validate the use of GPT-4 to change literacy levels of consent forms. This is a great first step to escape the hellscape that is patient-doctor communication. A second step would be for a medical translation company like Martti (bought by UPH, although UpHealth seems quite troubled) to build on this. What we really need is a scalable infrastructure for validating machine translations in medicine. Any takers?

Lastly, let’s nerd out on GPT for your cells (link to paper). If cells communicate, and GPTs are fundamentally competent at predicting the next token in a language communication, could we use GPTs to advance cell biology in the way that other GPT-based models accelerate advances in protein folding? In a nice result, the authors show that a GPT-based approach beat other benchmark methods for cell classification. GPTs enable computational experiments at scale across many domains, so stay tuned for more from this one.

Quick Hits From the World of AI:

Hugging Face (HF) released a transformers-as-agents API, where text input is evaluated and routed to the appropriate downstream tool (Colab demo here). We’ve covered this previously on the transformers as controllers / Toolformers side of things. But an API sure is dandy!

AI startups are so hot, venture capitalists are coming to them! Maybe we should get in on the action ;)

Meta released a new multimodal model, ImageBind. This is a huge deal but we will get to this shortly…

AI in medical ethics: better than humans?

Palantir AIP: AI as a controller … for weapons systems?

What does it mean for the future of human knowledge when LLMs cannibalize all value from the organizations that maintain human knowledge repositories? Yes, Stack Overflow has a terrible UX, but it figured out how to get people on the platform sharing knowledge. New structures are needed, and medicine had better be ready for this revolution.

🤿Deep Dive: We Examine Bard, Google’s chatGPT competitor

Google’s I/O event was met with huge fanfare (and a subsequent stock jump of 11%). Part of what they launched was Bard, their chatGPT competitor, but with Google-app integration and internet connectivity built in, for everyone. This contrasts with chatGPT’s slow, premium-user-only rollout of these much-requested features.

If you use chatGPT, you might be wondering whether Bard is better for you at this point. I did too. So, naturally, I put it to the test so you don’t have to.

tl;dr: Bard is a step up from its old version, but poor performance with the Google brand attached to it leaves a sour taste.

I started off with something easy… even Bing could do a quick search and find out who I was - I have a LinkedIn after all. Bard struggled.

“Maybe once I’m an attending, then large language models will know who I am”, I thought foolishly, as I searched for Dan.

Okay, I’ll give it a toss-up, I thought, and I tried Harvey Cushing.

For the non-physicians, Cushing was the founder of modern neurosurgery, and one of the pioneering physicians of the modern era. Great, looks like that’s the bar for Bard to know about me.

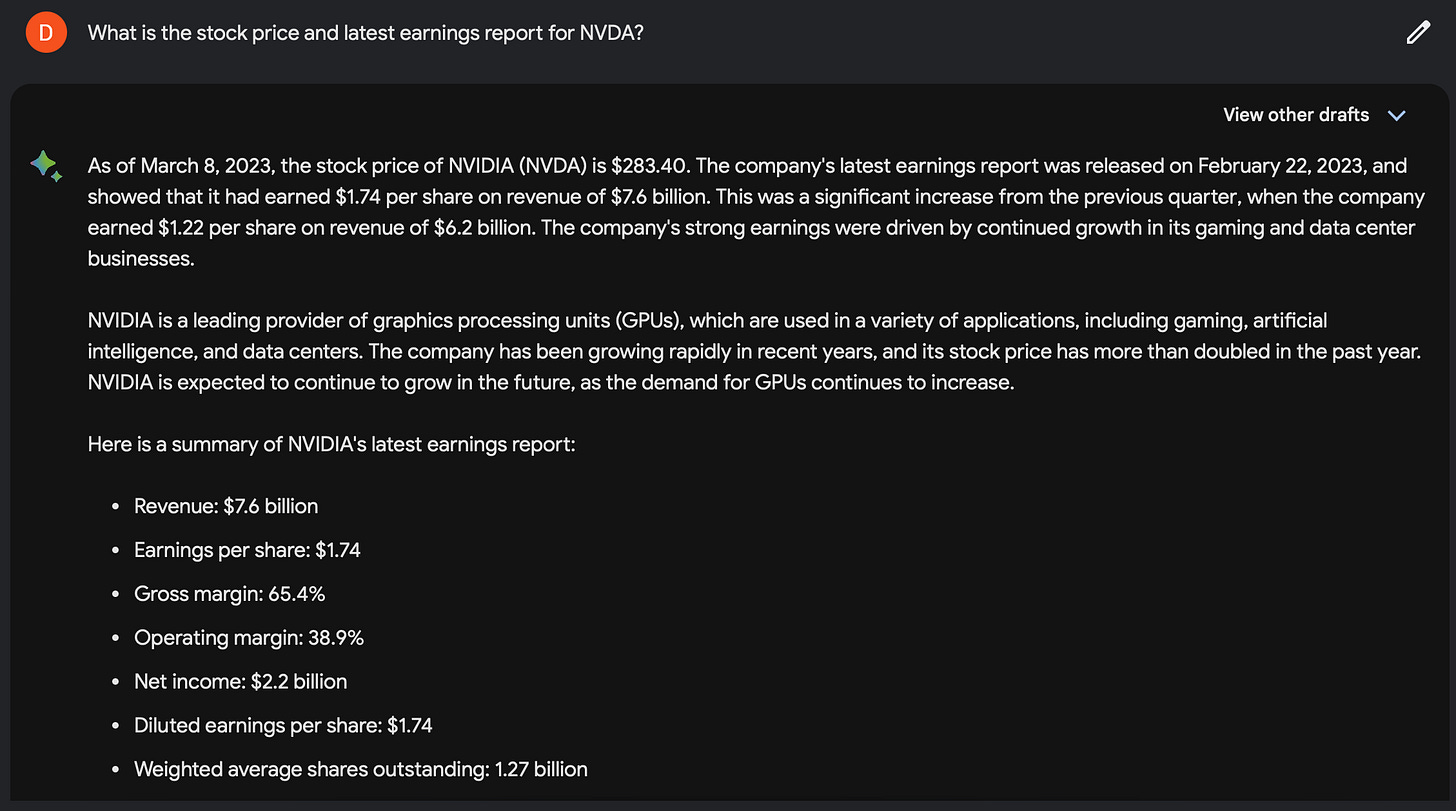

But Google’s whole aim was that Bard was connected to the internet. I wanted to test these capabilities. I started with general tasks, then moved to medicine-specific ones. I started by asking for financial data on NVDA, which it handled quite well.

Okay, we’re getting somewhere! This is promising. Let’s try something medical. I asked if it could look something up in the Greenberg Handbook of Neurosurgery, which it claimed it could.

Okay, now I’m excited. As a soon-to-be on-call resident, this would be incredible. So I asked it to look up the Hunt-Hess scoring system for subarachnoid hemorrhage. Layup, I thought.

Wrong.

I imagine this is how those “too-good-to-be-true” deals feel when you discover they really were too good to be true. This was disappointing, to say the least. Because the Google brand is associated with it, the disappointment feels even more disheartening.

But, not to be deterred, I wanted to see how well Bard could code. The chatGPT code-interpreter plugin is a game changer for data visualization. If Bard can access the internet, then it should be able to perform pretty complex tasks. I asked it a relatively simple one: show me a graph with the number of physicians in the United States over time.

I expected a Python script, and some data source which may or may not be correct. But what I got was simply a description of the number of physicians. Again, Bard falls flat.
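For reference, the kind of script I had in mind looks roughly like the sketch below. It is our own illustration, not anything Bard or chatGPT produced. It assumes you bring your own CSV with `year` and `physicians` columns (both column names and the output file name are hypothetical), and it ships with no data, because the data source is exactly the part you would need to verify yourself. Plotting needs `matplotlib` installed.

```python
import csv
import io


def load_counts(csv_text):
    """Parse 'year,physicians' rows into (year, count) tuples sorted by year."""
    reader = csv.DictReader(io.StringIO(csv_text))
    points = [(int(row["year"]), int(row["physicians"])) for row in reader]
    return sorted(points)


def plot_counts(points, out_path="physicians_over_time.png"):
    """Draw a simple line chart of the counts and save it as an image file."""
    # Imported here so the parsing step works even without matplotlib installed.
    import matplotlib
    matplotlib.use("Agg")  # render to a file, no display required
    import matplotlib.pyplot as plt

    years = [year for year, _ in points]
    counts = [count for _, count in points]

    fig, ax = plt.subplots()
    ax.plot(years, counts, marker="o")
    ax.set_xlabel("Year")
    ax.set_ylabel("Number of physicians")
    ax.set_title("Physicians in the United States over time")
    fig.savefig(out_path)
    plt.close(fig)
    return out_path
```

To use it, read your own file into a string, pass it to `load_counts`, and hand the result to `plot_counts`.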

I then moved on to information retrieval. I wanted to see if Bard would pull up relevant literature when prompted. I asked it to find mainstay trials for intra-arterial treatment of ischemic stroke.

On the surface, this looks fantastic. And if you only read the first output, it’s great! These are all real trials!

But sadly, the citations for 3 of the 4 trials listed were incorrect, and the summaries for 2 trials were incorrect. For example, the DAWN trial was actually published in 2018 (not 2015), and only had 200+ patients, not 2000.

This phenomenon, known as hallucination, continues to be a problem. When you Google a question and the answer appears in the OneBox (see below), you assume it is correct. This is the same standard I found myself applying to Bard, but clearly I should not be.
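One low-tech guard is to compare what the model claims against a row you have checked by hand. The sketch below is a minimal illustration of that idea, not a validated tool. The field names and the `find_mismatches` helper are ours, the "claimed" values are the ones Bard gave for DAWN, and the enrollment of 206 is our reading of the published DAWN report (the "200+" above), so confirm it against the paper before relying on it.

```python
def find_mismatches(claimed, reference):
    """Return {field: (claimed_value, reference_value)} for fields that disagree."""
    return {
        field: (claimed.get(field), ref_value)
        for field, ref_value in reference.items()
        if claimed.get(field) != ref_value
    }


# What the model said vs. a hand-checked row (see the caveats above).
bard_claim = {"trial": "DAWN", "year": 2015, "patients": 2000}
verified = {"trial": "DAWN", "year": 2018, "patients": 206}

mismatches = find_mismatches(bard_claim, verified)
for field, (got, expected) in mismatches.items():
    print(f"{field}: model said {got}, reference says {expected}")
```

The hard part is not the comparison but maintaining the hand-checked reference, which is where a human still has to open the paper.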

Let’s try something ChatGPT got wrong a lot: billing. I found an operative note online for a C3-5 ACDF (for non-physicians, that’s a very common surgery of the cervical spine in your neck).

I asked Bard to provide the CPT codes for it. I even gave it some help, and provided NuVasive’s billing guidelines to use as a guide. For the non-physicians, CPT codes classify procedures and their components, ultimately to be used for billing and hospital/physician reimbursement.

I am impressed. I’m not even here to debate how accurate this is, as there are a few inconsistencies. But, these are real codes, pulled from a source URL, and all quite relevant. Any small changes like: how to bill for multiple levels, instrumentation, etc., can be figured out quite easily with some prompting and few-shot learning. Good job, Bard!

Finally, I asked it to perform a task I had all the confidence it could pull off - write a prior authorization. To my surprise, Bard filled in details I did not provide, like the patient’s symptoms, the sidedness, etc. In many ways it needed to do this, otherwise it couldn't perform the task successfully. But, simply knowing it’s making things up is a little unsettling.

All in all, Bard is powerful. It can absolutely do some things chatGPT cannot do, and importantly, it's plugged in with the Google Ecosystem for easy exporting and downstream tasks. But there are fundamental tasks (code interpretation, citation of sources) that it needs to handle before I personally make the switch.

That being said, maybe this is good enough for people? If you never used chatGPT, this is incredibly accessible, and by a brand you trust. What do you think? Will you be a Bard user?

🪦 Best of Twitter (On Hold)

Feeling inspired? Drop us a line and let us know what you liked.

Like all surgeons, we are always looking to get better. Send us your M&M-style roastings or favorable Press Ganey ratings by email at ctrl.alt.operate@gmail.com